I’m Hoang, but people call me “Taio”. I have been a member of the SRE team in the Accounting Department of Money Forward, Inc. since November 2021. I’m a tech geek, so even if there’s an earthquake I’ll git commit … git push … before evacuating.

Note: The name is written in different ways, such as NGINX, nginx, or Nginx. In this article I will use NGINX as the standard.

We all know that NGINX is a lightweight, high-performance web server designed for high-traffic use cases. It is used for serving static content, load balancing, caching, acting as an application firewall, and reverse proxying. It’s designed to handle heavy loads, so 20,000 hits per second is no big deal. Isn’t that great? But I wanted more, and then I found OpenResty.

What is OpenResty?

The official website describes it this way: “OpenResty is a full-fledged web platform that integrates our enhanced version of the Nginx core, our enhanced version of LuaJIT, many carefully written Lua libraries, lots of high-quality 3rd-party NGINX modules, and most of their external dependencies. It is designed to help developers easily build scalable web applications, web services, and dynamic web gateways.”

OpenResty is basically an enhancement of NGINX. It turns the NGINX server into a powerful web application server in which web developers can use the Lua programming language and Lua modules to build very high-performance web applications capable of handling 10K to 1000K+ connections on a single server.

Beyond that, it aims to run your server-side web application entirely inside the NGINX server, leveraging NGINX’s event model to do non-blocking I/O not only with HTTP clients but also with remote backends such as MySQL, PostgreSQL, Memcached, and Redis.
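As a rough illustration of non-blocking I/O with a remote backend, here is a minimal sketch that reads one value from Redis with lua-resty-redis, a library bundled with OpenResty. The location name /redis-demo, the Redis address 127.0.0.1:6379, and the key "greeting" are assumptions made for this example, not part of any real setup.

```nginx
# nginx.conf (inside a server block); /redis-demo is a hypothetical location
location /redis-demo {
    default_type 'text/plain';

    content_by_lua_block {
        -- lua-resty-redis is built on cosockets, so the worker keeps
        -- serving other requests while this one waits for Redis
        local redis = require "resty.redis"
        local red = redis:new()
        red:set_timeouts(1000, 1000, 1000)  -- connect, send, read (ms)

        -- assumed Redis address; adjust for your environment
        local ok, conn_err = red:connect("127.0.0.1", 6379)
        if not ok then
            ngx.log(ngx.ERR, "failed to connect to Redis: ", conn_err)
            return ngx.exit(ngx.HTTP_SERVICE_UNAVAILABLE)
        end

        -- "greeting" is a hypothetical key
        local value, get_err = red:get("greeting")
        if not value then
            ngx.log(ngx.ERR, "failed to read from Redis: ", get_err)
            return ngx.exit(ngx.HTTP_INTERNAL_SERVER_ERROR)
        end

        -- a missing key comes back as ngx.null, not nil
        if value == ngx.null then
            ngx.say("greeting is not set")
        else
            ngx.say(value)
        end

        -- hand the connection back to the per-worker pool
        -- (10 s idle timeout, up to 100 pooled connections)
        local kept, keep_err = red:set_keepalive(10000, 100)
        if not kept then
            ngx.log(ngx.ERR, "failed to set keepalive: ", keep_err)
        end
    }
}
```

The code reads like ordinary sequential Lua, yet each network call yields to the NGINX event loop instead of blocking the worker process.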

Install OpenResty

Everything you need to install OpenResty is on the official website: https://openresty.org/en/installation.html

macOS

On macOS, use Homebrew:

brew install openresty/brew/openresty

Linux distributions

OpenResty provides official pre-built packages for some of the common Linux distributions (Ubuntu, Debian, CentOS, RHEL, Fedora, OpenSUSE, Alpine, and Amazon Linux). Installation instructions are on the page linked above.

OpenResty provides opm (OpenResty Package Manager), which is similar in rationale to Perl’s CPAN and Node.js’s npm. opm helps us install third-party Lua modules. Since version 1.11.2.2, OpenResty releases include and install opm by default. However, in the packages for some common Linux distributions opm is an optional, separate package. CentOS 7 is one example:

# add the yum repo:

wget https://openresty.org/package/centos/openresty.repo

sudo mv openresty.repo /etc/yum.repos.d/

# update the yum index:

sudo yum check-update

Then you can install the openresty package like this:

sudo yum install openresty -y

Don’t forget opm:

sudo yum install openresty-opm -y

Or you can download opm directly from GitHub into the directory /usr/local/openresty/bin/:

sudo wget -O /usr/local/openresty/bin/opm https://raw.githubusercontent.com/openresty/opm/master/bin/opm

Then create a symbolic link and make the script executable with chmod:

sudo ln -s /usr/local/openresty/bin/opm /usr/bin/opm && sudo chmod 755 /usr/bin/opm

Now you can check that opm works with the command:

opm -h  # show help

Instructions for using opm are available on the opm site listed in the References.

After the installation is complete, you can follow the getting-started guide to start OpenResty: https://openresty.org/en/getting-started.html

If you’re familiar with NGINX configuration, it should look very familiar to you. OpenResty is just an enhanced version of NGINX using addon modules anyway. You can take advantage of all the existing goodies in the NGINX world.
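To show how familiar it looks, here is a minimal configuration sketch along the lines of the getting-started guide. The port 8080 and the log path are assumptions for this example. Everything except content_by_lua_block is ordinary NGINX configuration.

```nginx
# conf/nginx.conf: a minimal "hello world" sketch
worker_processes 1;

# relative to the prefix directory passed with -p (assumed layout)
error_log logs/error.log;

events {
    worker_connections 1024;
}

http {
    server {
        listen 8080;  # assumed port

        location / {
            default_type text/html;

            # the only OpenResty-specific part: the response body is
            # produced by Lua instead of a static file or an upstream
            content_by_lua_block {
                ngx.say("<p>hello, world</p>")
            }
        }
    }
}
```

Assuming a working directory that contains conf/nginx.conf and an empty logs/ directory, something like openresty -p "$PWD/" -c conf/nginx.conf should start the server, and a request to http://localhost:8080/ should return the paragraph above.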

Where is Lua in NGINX?

Lua is a scripting language. It is rarely used as a standalone programming language. Instead, it is usually embedded into other programs, mainly ones written in C and C++.

The Lua interpreter (also known as “Lua State” or “LuaJIT VM instance”) is shared across all the requests in a single NGINX worker process to minimize memory use. Request contexts are segregated using lightweight Lua coroutines.

Loaded Lua modules persist at the NGINX worker process level, resulting in a small memory footprint in Lua even under heavy load.
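The worker-level persistence described above can be observed with a small sketch. The module name demo_counter, the port, and the /hits location are hypothetical. To keep everything in one file, the module table is registered through package.loaded, whereas in practice it would normally be a .lua file on lua_package_path. The sketch also assumes lua_code_cache is left at its default (on).

```nginx
# nginx.conf (http section); all names below are hypothetical
init_worker_by_lua_block {
    -- Runs once in every worker process. Registering the table in
    -- package.loaded makes a later require of demo_counter return it.
    package.loaded["demo_counter"] = { hits = 0 }
}

server {
    listen 8080;

    location /hits {
        default_type 'text/plain';

        content_by_lua_block {
            -- require returns the same cached table for every request
            -- handled by this worker, so the count survives across requests
            local counter = require "demo_counter"
            counter.hits = counter.hits + 1
            ngx.say("worker ", ngx.worker.pid(), " has served ",
                    counter.hits, " request(s)")
        }
    }
}
```

Each worker process has its own Lua VM, so with several workers every worker keeps its own count. Data that must be shared across workers belongs in a lua_shared_dict instead.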

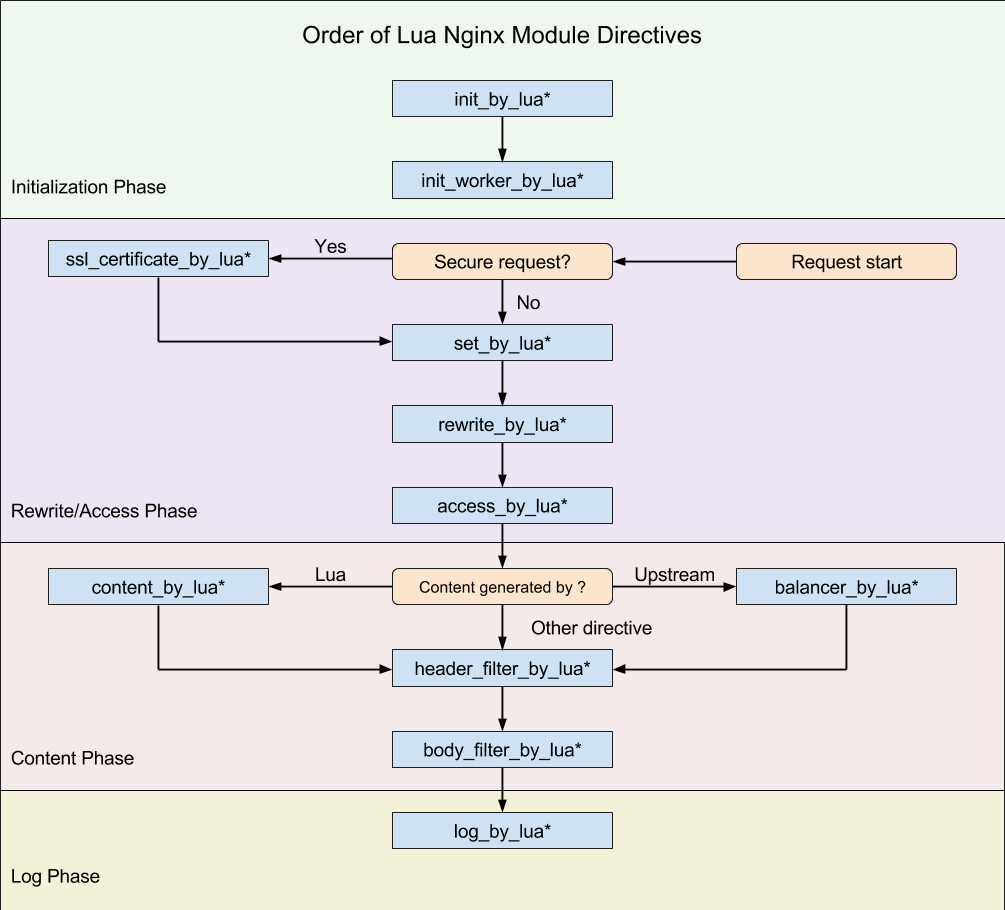

The basic building blocks of scripting NGINX with Lua are directives. Directives are used to specify when the user Lua code is run and how the result will be used. Below is a diagram showing the order in which directives are executed.

The general form of a Lua directive is xxx_by_lua_yyy, where xxx is the intended use (the processing phase in which the code runs) and yyy is where the Lua code lives (block for inline code, file for an external file). The example below will help you understand better.

# nginx.conf

location /test {

default_type 'text/plain';

access_by_lua_block {

-- check whether the client IP address is on our blacklist

if ngx.var.remote_addr == "132.5.72.3" then

ngx.exit(ngx.HTTP_FORBIDDEN)

end

-- check whether the URI contains bad words

if ngx.var.uri and

string.match(ngx.var.uri, "evil")

then

return ngx.redirect("/terms_of_use.html")

end

-- tests passed

}

content_by_lua_file /path/to/lua/app/root/$path.lua;

log_by_lua_block {

ngx.log(ngx.INFO, "Create some log here !")

}

}

When a request is sent to /test, the directives will be executed in the following order:

- access_by_lua_block: Runs the access checks in the code block. It first checks whether the client’s IP address is on the blacklist, then checks whether the URI contains bad words.

- content_by_lua_file: Generates the response for the request. The Lua code lives in an external file at /path/to/lua/app/root/$path.lua, where $path is an NGINX variable that is expanded for each request.

- log_by_lua_block: Writes a log entry once request processing has completed.
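One way to see this order for yourself is to record each phase in ngx.ctx, a table that lives for the duration of a single request. The location name /phases is hypothetical, and the sketch assumes error_log is configured at the notice level or lower so that the final message is actually written.

```nginx
# nginx.conf (inside a server block); /phases is a hypothetical location
location /phases {
    default_type 'text/plain';

    access_by_lua_block {
        -- first Lua phase in this location: start the trace
        ngx.ctx.trace = { "access" }
    }

    content_by_lua_block {
        -- ngx.ctx is shared by all phases of the same request
        table.insert(ngx.ctx.trace, "content")
        ngx.say("ok")
    }

    log_by_lua_block {
        -- runs after the response has been sent
        table.insert(ngx.ctx.trace, "log")
        ngx.log(ngx.NOTICE, "phases: ",
                table.concat(ngx.ctx.trace, " -> "))
    }
}
```

After a request to /phases, the error log should contain a line ending in "phases: access -> content -> log".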

Using Packages

One of the coolest things about OpenResty is that you can use packages. For example, suppose you want to count the number of requests to your server. Of course, you could count them from the server’s logs, but storing logs is very expensive. A better solution is to use the package knyar/nginx-lua-prometheus.

To globally install opm packages, just use:

sudo opm get knyar/nginx-lua-prometheus

To track request latency broken down by server name and request count broken down by server name and status, add the following to the http section of nginx.conf:

# nginx.conf

lua_shared_dict prometheus_metrics 10M;

lua_package_path "/usr/local/openresty/site/lualib/?.lua;;";

init_worker_by_lua_block {

prometheus = require("prometheus").init("prometheus_metrics")

metric_requests = prometheus:counter(

"nginx_http_requests_total", "Number of HTTP requests", {"host", "status"})

metric_latency = prometheus:histogram(

"nginx_http_request_duration_seconds", "HTTP request latency", {"host"})

metric_connections = prometheus:gauge(

"nginx_http_connections", "Number of HTTP connections", {"state"})

}

log_by_lua_block {

metric_requests:inc(1, {ngx.var.server_name, ngx.var.status:sub(1, 1) .. "XX"})

metric_latency:observe(tonumber(ngx.var.request_time), {ngx.var.server_name})

}

This:

- configures a shared dictionary for your metrics called prometheus_metrics with a 10MB size limit;

- sets lua_package_path, the search path for Lua packages. Packages installed with opm are placed in /usr/local/openresty/site/lualib, so the pattern /usr/local/openresty/site/lualib/?.lua matches every .lua file in lualib, and the trailing ;; stands for the default search path;

- registers a counter called nginx_http_requests_total with two labels: host and status;

- registers a histogram called nginx_http_request_duration_seconds with one label: host;

- registers a gauge called nginx_http_connections with one label: state;

- on each HTTP request, measures its latency, records it in the histogram, and increments the counter, setting the current server name as the host label and the HTTP status class (for example 2XX) as the status label.

The last step is to configure a separate server that exposes the metrics.

# nginx.conf

server {

listen 9145;

location /metrics {

content_by_lua_block {

metric_connections:set(ngx.var.connections_reading, {"reading"})

metric_connections:set(ngx.var.connections_waiting, {"waiting"})

metric_connections:set(ngx.var.connections_writing, {"writing"})

prometheus:collect()

}

}

}

Metrics will be available at http://your.nginx:9145/metrics.

Conclusion

OpenResty is open-source software and is not an NGINX fork. It is a higher-level application and gateway platform that uses NGINX as a component. It is designed to help developers easily build scalable web applications, web services, and dynamic web gateways.

References

- https://openresty.org/en/ (last visited on 22/02/2022)

- https://opm.openresty.org/ (last visited on 22/02/2022)

- https://github.com/knyar/nginx-lua-prometheus (last visited on 22/02/2022)

- https://github.com/openresty/lua-nginx-module#directives (last visited on 22/02/2022)
